2. School of Automation, Nanjing University of Science and Technology, Nanjing 210094, China

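Navigation systems for unmanned aerial vehicles (UAVs) have matured steadily and are increasingly used in military, civil, and agricultural applications. Outdoors, navigation relies mainly on the Global Positioning System (GPS) and differential GPS, which provide fast and accurate positioning. Indoors, buildings block GPS signals, so UAV navigation has to rely on the fusion of several sensors. Multi-sensor data fusion is therefore the foundation of indoor UAV navigation[1-3]. As data fusion algorithms continue to develop, multi-sensor data fusion is becoming increasingly intelligent.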

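In engineering practice, multi-sensor data fusion mainly combines sensors such as inertial navigation units, laser scanners, and cameras with Simultaneous Localization and Mapping (SLAM) algorithms to obtain accurate pose information and thereby achieve autonomous localization and navigation[4-5]. Current SLAM methods fall roughly into two classes. The first is based on probabilistic models; SLAM algorithms built on the Kalman filter belong to this class, for example the compressed filter, FastSLAM, and the full filter. The second class is non-probabilistic and includes SM-SLAM and scan matching[6]. The overall flow of SLAM algorithms is broadly similar: the environment information obtained from the sensors is first converted into environmental features, and the position of each feature in the map is then computed from the vehicle's own position and recorded. When the vehicle re-enters a mapped region, the currently detected features are matched against those in the map to determine the vehicle's position in the map. Research on SLAM focuses on the representation of environmental features, on data association, and on the accumulated error caused by observation errors, pose-estimation errors, and incorrect data association. Methods such as the Extended Kalman Filter (EKF) and the Particle Filter (PF) can effectively improve the accuracy and robustness of map building. Such research began early for ground robots and has produced abundant results[7-10]. An indoor UAV, however, has more degrees of freedom and a smaller payload, so the number of sensors it can carry is limited and the accuracy of the position estimate depends more heavily on the extraction and matching of environmental features. In complex indoor environments the features often change, and sensor faults can also make the features unreliable, which poses new difficulties and challenges for the reliability of data fusion in SLAM[11-15].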

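The Radial Basis Function (RBF) neural network can approximate arbitrary nonlinear functions, and it resolves the associated ill-posed problem by means of regularization theory. It generalizes well and converges quickly during learning, and it has been applied successfully to time series analysis, nonlinear function approximation, system modeling, data classification, information processing, pattern recognition, image processing, control, and fault diagnosis[16-17].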

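In summary, this paper adopts a navigation scheme that combines several data fusion techniques and is assisted by an RBF neural network. The prediction and compensation capability of the RBF neural network provides error compensation for the navigation system when the environmental feature information is unreliable, prevents serious flight accidents caused by unreliable navigation data, and lays a foundation for the intelligent development of indoor UAV navigation systems.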

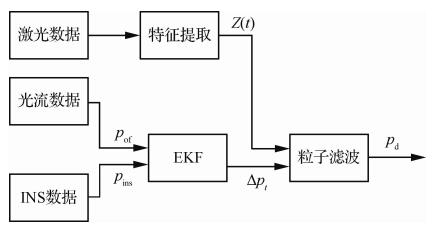

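1 EKF/PF indoor integrated navigation system

Current indoor integrated navigation systems mainly use multi-sensor data fusion that combines several filtering methods. The filtering algorithms used in this paper are the EKF and the PF, and the sensors are an Inertial Navigation System (INS), an optical flow sensor, and a laser sensor. Fig. 1 shows the structure of the EKF/PF indoor integrated navigation system, where $p_{\mathrm{of}}$ and $p_{\mathrm{ins}}$ are the position coordinates obtained from the optical flow sensor and the INS, respectively, $\Delta p_t$ is the position change from time $t-1$ to time $t$ obtained by the EKF, $Z(t)$ is the set of environmental features extracted from the laser data (including line and right-angle features), and $p_{\mathrm{d}}$ is the final output position estimate.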

Fig. 1 Structure of EKF/PF integrated indoor navigation system

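The structure consists of two parts. In the first part, the EKF filters the position information estimated from the INS and optical flow data to obtain a suboptimal position estimate[18]. In the second part, this suboptimal position and the environmental features extracted from the laser data are passed through a particle filter, which further removes process noise and reduces the accumulated error.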

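The EKF is designed first. The models of real systems and sensors are not strictly linear, but they are convergent, so the EKF is better suited to such nonlinear problems. The EKF linearizes the mean and the covariance[19-21] and consists of two steps, prediction and update.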

Step 1: prediction.

| $ p_{\mathrm{ins},t} = \boldsymbol{\Phi}\, p_{\mathrm{ins},t-1} $ | (1) |

| $ P_{t,t-1} = \boldsymbol{\Phi} P_{t-1} \boldsymbol{\Phi}^{\mathrm{T}} + \boldsymbol{Q} $ | (2) |

Step 2: update.

| $ H_t = P_{t,t-1} \boldsymbol{C}^{\mathrm{T}} (\boldsymbol{C} P_{t,t-1} \boldsymbol{C}^{\mathrm{T}} + \boldsymbol{R})^{-1} $ | (3) |

| $ p_t = p_{t,t-1} + H_t (p_{\mathrm{of},t} - \boldsymbol{C} p_{\mathrm{ins},t}) $ | (4) |

| $ P_t = (\boldsymbol{I} - H_t \boldsymbol{C}) P_{t,t-1} $ | (5) |

where $p_{\mathrm{ins},t}$ and $p_{\mathrm{of},t}$ are the positions obtained from the INS and the optical flow sensor at time $t$; $\boldsymbol{\Phi}$ is the transition matrix; $p_{t,t-1}$ is the predicted position and $p_t$ the updated position estimate; $P_{t,t-1}$ is the error covariance at time $t$ predicted from the error covariance $P_{t-1}$ of the previous time step, and $P_t$ is the updated error covariance; $\boldsymbol{Q}$ and $\boldsymbol{R}$ are the covariance matrices of the process noise and the measurement noise; $H_t$ is the Kalman gain at time $t$; and $\boldsymbol{I}$ and $\boldsymbol{C}$ are identity matrices.
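The prediction and update steps can be sketched in a few lines of NumPy. This is a minimal illustration rather than the flight code: the function name `ekf_step`, the two-dimensional state, and the numerical values are assumptions, and the predicted position is assumed to be the INS prediction of Eq. (1).

```python
import numpy as np

def ekf_step(p_ins_prev, P_prev, p_of, Phi, Q, R, C=None):
    """One predict/update cycle in the form of Eqs. (1)-(5)."""
    n = p_ins_prev.shape[0]
    C = np.eye(n) if C is None else C            # C is an identity matrix in the text
    # Prediction, Eqs. (1)-(2)
    p_pred = Phi @ p_ins_prev                    # assumed to play the role of p_{t,t-1}
    P_pred = Phi @ P_prev @ Phi.T + Q
    # Update, Eqs. (3)-(5)
    H = P_pred @ C.T @ np.linalg.inv(C @ P_pred @ C.T + R)
    p_new = p_pred + H @ (p_of - C @ p_pred)
    P_new = (np.eye(n) - H @ C) @ P_pred
    return p_new, P_new

# Illustrative values only (not taken from the experiments).
p, P = ekf_step(
    p_ins_prev=np.array([0.0, 0.0]),
    P_prev=np.eye(2),
    p_of=np.array([1.0, -1.0]),
    Phi=np.eye(2),
    Q=np.zeros((2, 2)),
    R=np.eye(2),
)
```

With equal prediction and measurement covariances, as in this toy call, the gain is one half and the estimate lies midway between the INS prediction and the optical flow measurement.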

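A particle filter is chosen to further reduce the accumulated error. The particle filter is based on the Monte Carlo method: it represents the probability distribution by a set of particles and estimates the system parameters from the sample mean of that set. Compared with filters such as the EKF, it is not restricted by the noise model or the system model (it can handle non-Gaussian noise and nonlinear models), and its theoretical accuracy is also higher[22-24].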

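First, the state transition equation of the system is established:

| $ \left\{ \begin{array}{l} \psi_t = \psi_{t-1} + \Delta\psi_t \\ x_t = x_{t-1} + \cos\psi_t \,\Delta x_t - \sin\psi_t \,\Delta y_t \\ y_t = y_{t-1} + \sin\psi_t \,\Delta x_t + \cos\psi_t \,\Delta y_t \end{array} \right. $ | (6) |

where $X(t) = \{\psi_t, x_t, y_t\}$ is the state at time $t$, namely the heading angle and the horizontal and vertical coordinates, and $\Delta\psi_t$, $\Delta x_t$, $\Delta y_t$ are the increments from time $t-1$ to time $t$.

The observation equation is established at the same time:

| $ Z(t) = h(X(t)) $ | (7) |

where $h(X(t))$ is the functional relationship between the state at time $t$ and the environmental feature values.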

Next, the initial particle set $X_0^i$ is generated, where $i$ is the particle index ($1 \le i \le N$) and $N$ is the total number of particles. Because the initial position is defined as (0, 0), the initial particles are drawn from the range

| $ \left\{ \begin{array}{l} -5^\circ \le \psi_0 \le 5^\circ \\ -2\ \mathrm{cm} \le x_0 \le 2\ \mathrm{cm} \\ -2\ \mathrm{cm} \le y_0 \le 2\ \mathrm{cm} \end{array} \right. $ |

From $\Delta\psi_1$, $\Delta x_1$, $\Delta y_1$, obtained at $t=1$ by applying the EKF to the optical flow and INS data, the particles at $t=1$ follow from the state transition equation.
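Initialization and propagation of the particle set can be sketched as follows. The sketch assumes uniform sampling inside the stated range, stores each particle as `[psi, x, y]` with the heading in radians and the position in centimetres, and uses illustrative names (`init_particles`, `propagate`) and an illustrative particle count.

```python
import numpy as np

def init_particles(n, rng):
    """Draw n particles [psi, x, y] uniformly from the initial range (rad, cm, cm)."""
    high = np.array([np.deg2rad(5.0), 2.0, 2.0])
    return rng.uniform(-high, high, size=(n, 3))

def propagate(particles, d_psi, d_x, d_y):
    """Apply the state transition of Eq. (6) to every particle."""
    psi = particles[:, 0] + d_psi
    x = particles[:, 1] + np.cos(psi) * d_x - np.sin(psi) * d_y
    y = particles[:, 2] + np.sin(psi) * d_x + np.cos(psi) * d_y
    return np.column_stack([psi, x, y])

rng = np.random.default_rng(0)
particles0 = init_particles(500, rng)
# Increments as they would come from the EKF stage (illustrative values).
particles1 = propagate(particles0, d_psi=0.0, d_x=10.0, d_y=0.0)
```

Because the body-frame increments are rotated by each particle's own heading, particles with slightly different headings spread out over time, which is what later allows the laser features to discriminate between them.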

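From $Z(t) = h(X(t))$, the feature value $Z_1^i$ corresponding to the $i$-th particle at $t=1$ is obtained. For each particle, the difference between the sensor measurement $Z_t$ at time $t$ and the computed feature value $Z_t^i$ determines the particle's weight: the smaller the difference, the larger the weight, and vice versa.

The weight function is defined as

| $ \omega_t^i = \exp\left( -\frac{1}{2} \cdot (Z_t^i - Z_t) \right) $ | (8) |

The weights are then normalized:

| $ \omega_t^{(i)} = \frac{\omega_t^i}{\sum\limits_{j=1}^{N} \omega_t^j} $ | (9) |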
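The weighting step can be illustrated with the short sketch below. It is written under one explicit assumption: the exponent uses the squared Euclidean residual between predicted and measured features, a common choice that makes the weight depend only on the size of the mismatch, whereas Eq. (8) as printed uses the raw difference. The name `particle_weights` is illustrative.

```python
import numpy as np

def particle_weights(z_pred, z_meas):
    """Normalized weights from predicted features z_pred (N, d) and a measurement z_meas (d,)."""
    # Assumption: squared Euclidean residual in the exponent.
    resid = np.sum((z_pred - z_meas) ** 2, axis=1)
    # Subtracting the minimum avoids underflow and cancels after normalization.
    w = np.exp(-0.5 * (resid - resid.min()))
    return w / w.sum()                      # normalization, Eq. (9)

# Three particles whose predicted feature is 0, 1 and 2 units from the measurement.
w = particle_weights(np.array([[0.0], [1.0], [2.0]]), np.array([0.0]))
```

The particle whose predicted feature matches the measurement receives the largest weight, and the weights sum to one.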

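Because the initial position is defined to be exact, the particle weights are all relatively large in the initial stage and frequent resampling is unnecessary. A safe region $[-\delta, \delta]$ is therefore defined: when the spread of the particles lies within the safe region, no resampling is performed; otherwise particles with large weights are duplicated and particles with small weights are discarded to prevent particle degeneracy.

Finally, the probability distribution of the current state is obtained, and the weighted sum over all particles gives the estimate of the current state.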
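A possible realization of the resampling rule and the weighted estimate is sketched below. Several details are assumptions that the text does not fix: the spread is measured as the largest position deviation from the weighted mean, systematic resampling is used to duplicate heavy particles, and the heading is averaged directly, which is only reasonable for a narrow heading spread.

```python
import numpy as np

def estimate(particles, weights):
    """Weighted sum over all particles (heading averaged directly, small spread assumed)."""
    return weights @ particles

def systematic_resample(particles, weights, rng):
    """Duplicate heavy particles and drop light ones; weights become uniform."""
    n = len(weights)
    positions = (rng.random() + np.arange(n)) / n
    cumsum = np.cumsum(weights)
    cumsum[-1] = 1.0                          # guard against round-off
    idx = np.searchsorted(cumsum, positions)
    return particles[idx], np.full(n, 1.0 / n)

def resample_if_needed(particles, weights, delta, rng):
    """Resample only when the position spread leaves the safe region [-delta, delta]."""
    center = estimate(particles, weights)
    spread = np.max(np.abs(particles[:, 1:] - center[1:]))
    if spread <= delta:
        return particles, weights
    return systematic_resample(particles, weights, rng)

rng = np.random.default_rng(0)
particles = np.array([[0.0, 0.0, 0.0], [0.0, 10.0, 0.0]])
weights = np.array([1.0, 0.0])
new_p, new_w = resample_if_needed(particles, weights, delta=1.0, rng=rng)
same_p, same_w = resample_if_needed(particles, weights, delta=100.0, rng=rng)
```

In the first call the zero-weight outlier lies outside the safe region, so it is replaced by a copy of the heavy particle; in the second call the region is wide enough that the set is returned unchanged.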

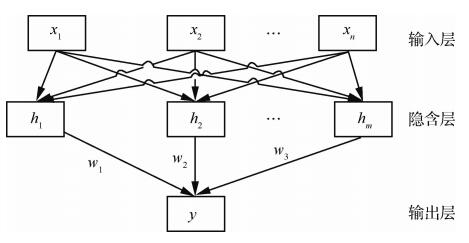

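2 Indoor integrated navigation system based on RBF neural network error compensation

2.1 RBF neural network model

The RBF neural network was proposed in 1988. Compared with other neural networks, it generalizes well and has a relatively simple structure, which avoids lengthy computation. It contains three layers, namely an input layer, a hidden layer, and an output layer, and the activation functions of the hidden-layer neurons are radial basis functions[25-26]. Fig. 2 shows the structure of the RBF neural network.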

Fig. 2 Structure of RBF neural network model

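In the RBF network, $X = \{x_i\}$ $(i = 1, 2, \cdots, n)$ is the network input and $H = \{h_j\}$ $(j = 1, 2, \cdots, m)$ is the hidden-layer output, where $h_j$ is the output of the $j$-th hidden neuron:

| $ h_j = \exp\left( -\frac{(x - c_j)^2}{2 b_j^2} \right) $ | (10) |

where $c_j$ is the center of the Gaussian basis function of the $j$-th neuron and $b_j$ is its width.

The weights of the RBF network are

| $ w = \{ w_1, w_2, \cdots, w_m \} $ | (11) |

and the output of the RBF network is

| $ y = w_1 h_1 + w_2 h_2 + \cdots + w_m h_m $ | (12) |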
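The forward pass of Eqs. (10)-(12) can be written compactly in NumPy. The function name `rbf_forward` and the numerical values are illustrative; for a vector input the squared distance to each center is taken as the sum of squared components.

```python
import numpy as np

def rbf_forward(x, c, b, w):
    """x: input (n,), c: centers (m, n), b: widths (m,), w: weights (m,)."""
    h = np.exp(-np.sum((x - c) ** 2, axis=1) / (2.0 * b ** 2))   # Eq. (10)
    y = w @ h                                                     # Eq. (12)
    return y, h

# Two hidden neurons on a scalar input (illustrative values).
y, h = rbf_forward(
    x=np.array([0.0]),
    c=np.array([[0.0], [1.0]]),
    b=np.array([1.0, 1.0]),
    w=np.array([2.0, 3.0]),
)
```

The neuron whose center coincides with the input responds with 1, and the response of the other neuron decays with its distance from the input.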

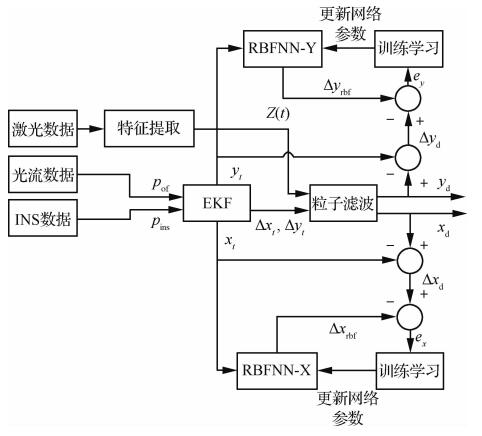

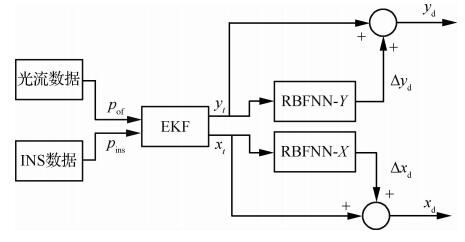

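Figs. 3 and 4 show the structure of the integrated navigation system based on RBF neural network error compensation, which operates in a learning mode and a prediction mode. To avoid cross-coupling between channels and to speed up training, the actual system uses several RBF neural networks in parallel[27-28]: the system is split into two channels for the horizontal and vertical coordinates (the x and y channels), denoted RBFNN-X and RBFNN-Y.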

Fig. 3 Block diagram of parallel structure learning mode system based on RBFNN data fusion

Fig. 4 Block diagram of parallel structure prediction mode system based on RBFNN data fusion

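When the environmental features extracted from the laser data are reliable, the system enters the neural network learning mode. The position estimate $p_t = \{x_t, y_t\}$ obtained by the EKF at time $t$ is used, channel by channel, as the input of the RBF neural networks, and the position error before and after particle filtering, $\Delta p_{\mathrm{d}} = \{\Delta x_{\mathrm{d}}, \Delta y_{\mathrm{d}}\}$, is used as the reference output for training. When the laser data become unusable, for example because of strongly reflective surfaces or changes in the environmental features, the system enters the prediction mode, in which the trained RBF neural networks predict and compensate the error, thereby improving the reliability of data fusion[29-32]. Here $\Delta x_{\mathrm{d}}$ and $\Delta y_{\mathrm{d}}$ are the position errors before and after particle filtering in the x and y channels, $\Delta p_{\mathrm{rbf}} = \{\Delta x_{\mathrm{rbf}}, \Delta y_{\mathrm{rbf}}\}$ are the corresponding errors predicted by the RBF neural network of each channel, and $e_p = \{e_x, e_y\}$ is the total sample error.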
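The switching between the two modes can be sketched for a single channel as follows. The sketch rests on stated assumptions: the error is taken as the PF output minus the EKF output, so that the compensated position in prediction mode is the EKF estimate plus the predicted error, and the class `MeanErrorModel` is a hypothetical toy stand-in for RBFNN-X or RBFNN-Y that simply remembers the mean error.

```python
class MeanErrorModel:
    """Toy stand-in for one channel network: predicts the mean error seen so far."""

    def __init__(self):
        self.total = 0.0
        self.count = 0

    def learn(self, p_ekf, error):
        self.total += error
        self.count += 1

    def predict(self, p_ekf):
        return self.total / self.count if self.count else 0.0


def fuse_channel(p_ekf, p_pf, features_reliable, model):
    """Return the output position of one channel for the current time step."""
    if features_reliable:
        # Learning mode: train on the error between PF and EKF, output the PF result.
        model.learn(p_ekf, p_pf - p_ekf)      # sign convention is an assumption
        return p_pf
    # Prediction mode: laser features unusable, compensate the EKF estimate.
    return p_ekf + model.predict(p_ekf)


rbfnn_x = MeanErrorModel()
out_learn = fuse_channel(1.0, 1.5, True, rbfnn_x)    # features reliable
out_pred = fuse_channel(2.0, None, False, rbfnn_x)   # features lost
```

Any model that exposes `learn` and `predict` can be substituted for the toy class, including an RBF network trained online.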

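2.3 RBF neural network training method

Because of uncertain factors such as the maneuverability of the vehicle and the flight environment, a neural network model obtained by offline learning has large prediction errors and cannot meet the performance requirements of the navigation system. The RBF neural network is therefore trained online on the latest learning data produced by the EKF. A windowed sampling method updates the training data inside the window in real time, which keeps the model up to date and preserves the real-time performance of the flight control system[33].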
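One simple way to realize such a training window is a fixed-length queue that discards the oldest sample whenever a new one arrives. The class name `TrainingWindow` and the window length are illustrative assumptions; the text does not specify the window size.

```python
from collections import deque


class TrainingWindow:
    """Keeps only the most recent (input, target) pairs for online training."""

    def __init__(self, size):
        self.samples = deque(maxlen=size)     # oldest sample is dropped automatically

    def add(self, p_ekf, dp_target):
        self.samples.append((p_ekf, dp_target))

    def batch(self):
        return list(self.samples)


window = TrainingWindow(size=3)
for k in range(5):
    window.add(float(k), 0.1 * k)             # synthetic samples, not flight data
latest = window.batch()
```

After five insertions into a window of length three, only the three most recent samples remain available for training.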

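For the RBF neural network training part in Fig. 3, let $\Delta p_{\mathrm{d}}$ be the desired output. The output error is

| $ e = \Delta p_{\mathrm{d}} - \Delta p_{\mathrm{rbf}} $ | (13) |

The error index of a training sample is

| $ E = (\Delta p_{\mathrm{d}} - \Delta p_{\mathrm{rbf}})^2 $ | (14) |

When the error index does not meet the requirement, that is, when it exceeds the set threshold, the adjustments $\Delta w_j$, $\Delta c_j$, and $\Delta b_j$ of the weight $w_j$, the Gaussian basis function center $c_j$, and the Gaussian basis function width $b_j$ are computed by gradient descent, and the network parameters are updated according to Eq. (15).

| $ \left\{ \begin{array}{l} w_j(k) = w_j(k-1) + \Delta w_j(k) + \alpha \Delta w_j(k-1) \\ c_j(k) = c_j(k-1) + \Delta c_j(k) + \beta \Delta c_j(k-1) \\ b_j(k) = b_j(k-1) + \Delta b_j(k) + \gamma \Delta b_j(k-1) \end{array} \right. $ | (15) |

where $\alpha$, $\beta$, and $\gamma$ are momentum factors, which prevent the weights from oscillating when the learning rate is too large, and $k$ is the iteration number.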
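The training rule can be sketched as a small class. The gradient expressions are the standard ones for a Gaussian RBF network and are an assumption here, since the text does not list them; the constant factor from differentiating the squared error is absorbed into the learning rate. The learning rate, momentum factors, threshold, and the synthetic target are illustrative values.

```python
import numpy as np


class RBFNet:
    """Single-output RBF network trained by gradient descent with momentum."""

    def __init__(self, centers, widths, lr=0.1, alpha=0.05, beta=0.05, gamma=0.05,
                 threshold=1e-8):
        self.c = np.array(centers, dtype=float)       # (m, n)
        self.b = np.array(widths, dtype=float)        # (m,)
        self.w = np.zeros(len(self.b))
        self.lr, self.threshold = lr, threshold
        self.alpha, self.beta, self.gamma = alpha, beta, gamma
        self.dw_prev = np.zeros_like(self.w)
        self.dc_prev = np.zeros_like(self.c)
        self.db_prev = np.zeros_like(self.b)

    def predict(self, x):
        h = np.exp(-np.sum((x - self.c) ** 2, axis=1) / (2.0 * self.b ** 2))
        return self.w @ h, h

    def train_step(self, x, target):
        y, h = self.predict(x)
        e = target - y                                # Eq. (13)
        E = e ** 2                                    # Eq. (14)
        if E <= self.threshold:                       # error index already acceptable
            return E
        diff = x - self.c
        # Gradient-descent adjustments (assumed standard form).
        dw = self.lr * e * h
        dc = (self.lr * e * self.w * h / self.b ** 2)[:, None] * diff
        db = self.lr * e * self.w * h * np.sum(diff ** 2, axis=1) / self.b ** 3
        # Eq. (15): current adjustment plus momentum on the previous adjustment.
        self.w += dw + self.alpha * self.dw_prev
        self.c += dc + self.beta * self.dc_prev
        self.b += db + self.gamma * self.db_prev
        self.dw_prev, self.dc_prev, self.db_prev = dw, dc, db
        return E


def mse(net, xs, ts):
    return float(np.mean([(t - net.predict(np.array([x]))[0]) ** 2 for x, t in zip(xs, ts)]))


xs = np.linspace(-1.0, 1.0, 21)
ts = 0.3 * xs                                         # synthetic target, not flight data
net = RBFNet(centers=np.linspace(-1.0, 1.0, 5)[:, None], widths=np.full(5, 0.5))
mse_before = mse(net, xs, ts)
for _ in range(200):
    for x, t in zip(xs, ts):
        net.train_step(np.array([x]), t)
mse_after = mse(net, xs, ts)
```

In the system described above, one such network would be instantiated per channel and fed with the samples of the current training window.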

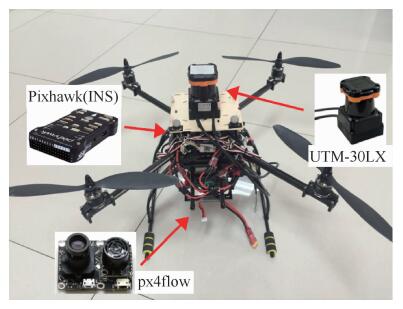

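3 Experimental results

3.1 Fixed-point experiment results

To verify the effectiveness of the algorithm, extensive flight experiments were carried out on a quadrotor UAV platform. Fig. 5 shows the experimental equipment. The main control system and the INS navigation module are integrated in the Pixhawk, the px4flow optical flow sensor provides optical flow data to the EKF, and the 2D laser scanner, through an onboard coprocessor, provides environmental feature information to the particle filter. Table 1 lists the accuracy parameters of the sensors.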

Fig. 5 Experimental platform and main sensors

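| Sensor | Accuracy parameter | Value |
|---|---|---|
| INS | Position accuracy/m | >5 |
| | Velocity accuracy/(m·s-1) | 10 |
| px4flow optical flow sensor | Optical flow computation rate/Hz | 120 |
| | Maximum sensed angular rate/((°)·s-1) | 2 000 |
| | Maximum data update rate/Hz | 780 |
| UTM-30LX 2D laser scanner | Measurement range/m | 0.1~30; Max. 60 m (270°) |
| | Measurement accuracy/m | (0.1~10): ±0.03; (10~30): ±0.05 |
| | Angular resolution/(°) | 0.25 |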

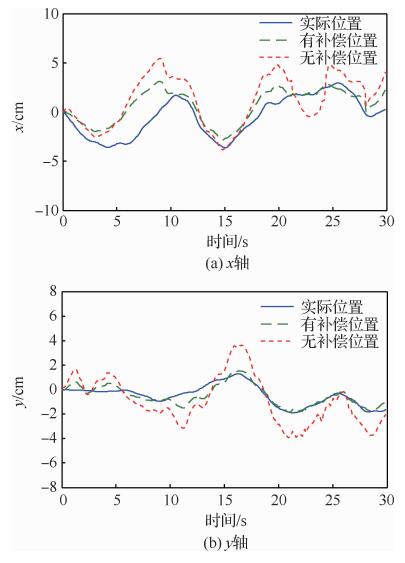

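In the experiment, the data fusion algorithm with neural network prediction and compensation was first implemented on the coprocessor. While the vehicle hovered at a fixed point, the environmental features were changed, and the position before and after neural network compensation was recorded together with the actual position from VICON (a high-precision optical motion capture system). The environmental features used in the experiment were walls, that is, regular line and right-angle features, and the feature change was produced by masking the data of the existing line and right-angle features, which emulates an abrupt change of the environment. The errors of the positions before and after compensation relative to the actual position were then compared to verify the algorithm in the fixed-point state. Fig. 6 compares the estimated positions before and after compensation with the actual position in the x and y channels.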

Fig. 6 Comparison of position and actual position before and after compensation

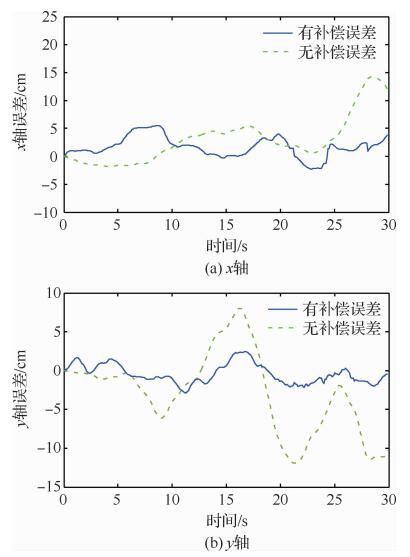

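Fig. 7 compares the position errors in the x and y directions before and after neural network compensation during the 30 s after the environmental features changed. With compensation, the data fusion algorithm keeps the position error within an acceptable range, whereas without compensation the position error is large. Table 2 further shows that, in both the x and y channels, the compensated position has a smaller mean error and a smaller maximum error.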

Fig. 7 Comparison of position error before and after compensation

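| Type | x-axis error before compensation/cm | x-axis error after compensation/cm | y-axis error before compensation/cm | y-axis error after compensation/cm |
|---|---|---|---|---|
| Mean error | 3.663 7 | 1.999 | 4.637 7 | 1.147 9 |
| Maximum error | 14.270 2 | 5.503 5 | 11.910 5 | 2.865 6 |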
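Statistics of this kind can be computed from logged data as sketched below. The sketch assumes that the mean error is the mean absolute deviation from the VICON position and that the maximum error is the largest absolute deviation; the sample values are made up for illustration and are not the experimental data.

```python
import numpy as np

def error_stats(estimated, actual):
    """Mean and maximum absolute position error of one channel."""
    err = np.abs(np.asarray(estimated, dtype=float) - np.asarray(actual, dtype=float))
    return err.mean(), err.max()

# Made-up samples in cm, for illustration only.
mean_err, max_err = error_stats([1.0, -2.0, 3.0], [0.0, 0.0, 0.0])
```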

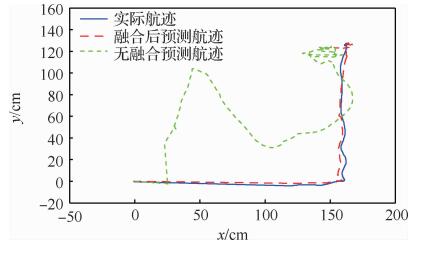

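A trajectory experiment with the quadrotor UAV further shows that the data fusion algorithm with neural network prediction and compensation improves the reliability of the navigation position when the environmental features change. In this experiment the quadrotor was commanded to fly 1.6 m along the x axis and then 1.3 m along the y axis while VICON recorded the actual trajectory. The positions obtained by the algorithm before and after compensation were compared with the actual position to demonstrate the effectiveness of the compensated data fusion algorithm.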

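The coprocessor was configured in advance to stop outputting environmental features 10 s after takeoff. Fig. 8 compares the three trajectories. At the moment the environmental features change, the position from the uncompensated data fusion algorithm quickly loses accuracy because it is contaminated by erroneous feature information, whereas the position from the compensated algorithm remains reliable. Taken together, the experimental results show that the data fusion algorithm with RBF neural network prediction and compensation improves the reliability of the quadrotor's navigation system when the environmental features change and keeps the navigation accuracy acceptable for a period of time after an abrupt change.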

Fig. 8 Comparison of trajectory and actual trajectory before and after compensation

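To address the rapid loss of position accuracy in the EKF/PF integrated navigation system when the environmental features change abruptly, an RBF neural network is proposed to predict and compensate the position error before and after the PF. The network operates in learning mode when the environmental feature information is reliable and in prediction mode when the features change, compensating the error of the position estimated by the EKF. Fixed-point and trajectory experiments with a quadrotor UAV show that the compensated data fusion algorithm improves the reliability of the navigation system when the environmental features change, which demonstrates the effectiveness and correctness of the proposed method.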

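In both the military and civil fields, technical reliability is the foundation and guarantee of functionality. For tactical weapons and robots that move in space in particular, navigation is a prerequisite for their function. As information-based weapons and equipment develop, China's requirements for precision guidance and precision strike are rising steadily. Although the integrated navigation system proposed in this paper has so far been verified only on a UAV, it is expected, after further experiments and technical refinement, to contribute to the development of information-based weapons and equipment.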

| [1] | WU X L, SHI Z Y, ZHONG Y S. Review of UAV visual navigation research[J]. Journal of System Simulation, 2010, 22(S1): 62-65. (in Chinese) |

| [2] | HOW J P, BETHKE B, FRANK A, et al. Real-time indoor autonomous vehicle test environment[J]. IEEE Control Systems, 2008, 28(2): 51-64. |

| [3] | BACHRACH A, PRENTICE S, HE R, et al. RANGE-Robust autonomous navigation in GPS-denied environments[J]. Journal of Field Robotics, 2011, 28(5): 644-666. |

| [4] | TOURNIER G, VALENTI M, HOW J, et al. Estimation and control of a quadrotor vehicle using monocular vision and moire patterns[C]//AIAA Guidance, Navigation and Control Conference and Exhibit. Reston: AIAA, 2006: 21-24. |

| [5] | RONDON E, GARCIA-CARRILLO L R, FANTONI I. Vision-based altitude, position and speed regulation of a quadrotor rotorcraft[C]//2010 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2010: 628-633. |

| [6] | BAZIN J C, KWEON I, DEMONCEAUX C, et al. UAV attitude estimation by vanishing points in catadioptric images[C]//2008 IEEE International Conference on Robotics and Automation, 2008: 2743-2749. |

| [7] | KANADE T, AMIDI O, KE Q. Real-time and 3D vision for autonomous small and micro air vehicles[C]//43rd IEEE Conference on Decision and Control, 2014, 2: 1655-1662. |

| [8] | TONG W W. Synthetic vision method for UAV navigation[D]. Hangzhou: Zhejiang University, 2010. (in Chinese) |

| [9] | STOWERS J, BAINBRIDGE-SMITH A, HAYES M, et al. Optical flow for heading estimation of a quadrotor helicopter[J]. International Journal of Micro Air Vehicles, 2009, 1(4): 229-239. |

| [10] | VERVELD M J, CHU Q P, WAGTER C D, et al. Optic flow based state estimation for an indoor micro air vehicle[M]. 2012. |

| [11] | SOBERS D, CHOWDHARY G, JOHNSON E N. Indoor navigation for unmanned aerial vehicles[M]. 2009. |

| [12] | SALAZAR S, ROMERO H, GOMEZ J, et al. Real-time stereo visual servoing control of an UAV having eight-rotors[C]//2009 6th International Conference on Electrical Engineering, Computing Science and Automatic Control, 2009: 1-11. |

| [13] | YU H, BEARD R, BYRNE J. Vision-based navigation frame mapping and planning for collision avoidance for miniature air vehicles[J]. Control Engineering Practice, 2012, 18(7): 824-836. |

| [14] | AHRENS S, LEVINE D, ANDREWS G, et al. Vision-based guidance and control of a hovering vehicle in unknown, GPS-denied environments[C]//2009 IEEE International Conference on Robotics and Automation, 2009: 2643-2648. |

| [15] | CHITRAKARAN V K, DAWSON D M, CHEN J, et al. Vision assisted autonomous landing of an unmanned aerial vehicle[C]//44th IEEE Conference on Decision and Control, 2005: 1465-1470. |

| [16] | BILLS C, CHEN J, SAXENA A. Autonomous MAV flight in indoor environments using single image perspective cues[C]//2012 IEEE International Conference on Robotics and Automation (ICRA), 2012: 5776-5783. |

| [17] | GREEN W E, OH P Y, BARROWS G. Flying insect inspired vision for autonomous aerial robot maneuvers in near-earth environments[C]//2014 IEEE International Conference on Robotics and Automation, 2014, 3: 2347-2352. |

| [18] | SUN S L, DENG Z L. Multi-sensor optimal information fusion Kalman filter[J]. Automatica, 2014, 40(6): 1017-1023. |

| [19] | SUN S. Multi-sensor optimal information fusion Kalman filters with applications[J]. Aerospace Science and Technology, 2004, 8(1): 57-62. |

| [20] | OLFATI-SABER R. Distributed Kalman filtering for sensor networks[C]//2007 46th IEEE Conference on Decision and Control, 2007: 5492-5498. |

| [21] | OLFATI-SABER R, JALALKAMALI P. Collaborative target tracking using distributed Kalman filtering on mobile sensor networks[C]//2011 American Control Conference, 2011: 1100-1105. |

| [22] | HUANG X P, WANG Y, MIAO P C. Particle filtering principle and application[M]. Beijing: Electronic Industry Press, 2017. (in Chinese) |

| [23] | WANG E S. Research on particle filter algorithm based on generalized regression neural network[J]. Journal of Shenyang University of Aeronautics and Astronautics, 2014, 31(6): 54-58. (in Chinese) |

| [24] | LIU J K. RBF neural network adaptive control[M]. Beijing: Tsinghua University Press, 2014: 1. (in Chinese) |

| [25] | WANG E S, LI X K, ZHANG Z X, et al. Research on particle filter algorithm based on generalized regression neural network[J]. Journal of Shenyang University of Aeronautics and Astronautics, 2014, 31(6): 54-58. (in Chinese) |

| [26] | WANG X, JIANG A G, WANG S. Distributed sensor networks for multi-sensor data fusion in intelligent maintenance[C]//3rd International Symposium on Instrumentation Science and Technology, 2004: 587-592. |

| [27] | WANG F, CUI J Q, CHEN B M, et al. A comprehensive UAV indoor navigation system based on vision optical flow and laser FastSLAM[J]. Acta Automatica Sinica, 2013, 39(11): 1889-1899. |

| [28] | HANG Y J, LIU J Y, LI R B, et al. MEMS IMU/LADAR integrated navigation method based on mixed feature match[J]. Acta Aeronautica et Astronautica Sinica, 2014, 35(9): 2583-2592. (in Chinese) |

| [29] | KONG T H, FANG Z, LI P. Indoor integrated navigation of micro aerial vehicle based on radar-scanner and inertial navigation system[J]. Control Theory & Applications, 2014, 31(5): 607-613. |

| [30] | BAR-SHALOM Y. On the track-to-track correlation problem[J]. IEEE Transactions on Automatic Control, 1981, 26(2): 571-572. |

| [31] | CARLSON N A. Federated square root filter for decentralized parallel processors[J]. IEEE Transactions on Aerospace and Electronic Systems, 1990, 26(3): 517-525. |

| [32] | ABDULHAFIZ W A, KHAMIS A. Bayesian approach to multisensor data fusion with pre-and post-filtering[C]//2013 10th IEEE International Conference on Networking, Sensing and Control (ICNSC), 2013: 373-378. |

| [33] | CHEN Z, CAI Y. Data fusion algorithm for multi-sensor dynamic system based on interacting multiple model[J]. Journal of Shanghai Jiaotong University (Science), 2015, 20(3): 265-272. |